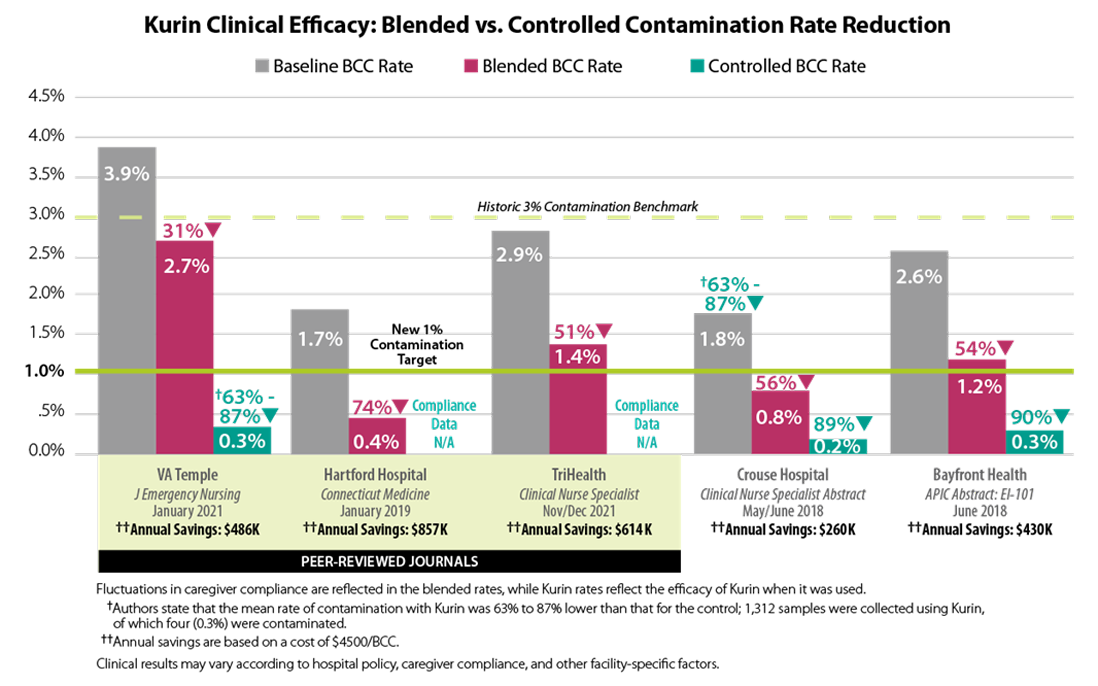

Clinical Evidence

How to Evaluate Clinical Studies of BCC Technology

Three study-design factors influence the efficacy reported for technology designed to reduce blood culture contamination (BCC) rates:

- Sample Definition – Does the study count all cultures drawn over the study period, or only those drawn with the device?

- Method Inclusion – Does the study account for cultures drawn by all methods (venipuncture, syringe draws, and peripheral IV catheters)?

- Environmental Control – Was use of the technology closely supervised, or did clinicians decide on their own when to use the device?
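
To make the Sample Definition and Method Inclusion questions concrete, the short Python sketch below computes a contamination rate two ways: over device-drawn cultures only, and over every culture drawn in the period. All counts, the `Culture` record, and the `contamination_rate` helper are hypothetical and chosen purely for illustration. They are not taken from any study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Culture:
    """One blood culture draw (hypothetical record layout)."""
    method: str         # e.g. "venipuncture", "syringe", "peripheral_iv"
    used_device: bool   # was the BCC-reduction device used for this draw?
    contaminated: bool  # was the culture classified as contaminated?


def contamination_rate(cultures):
    """Return contaminated / total as a fraction, or None if there are no cultures."""
    cultures = list(cultures)
    if not cultures:
        return None
    return sum(c.contaminated for c in cultures) / len(cultures)


# Hypothetical, made-up counts for illustration only.
cultures = (
    [Culture("venipuncture", True, False)] * 196
    + [Culture("venipuncture", True, True)] * 4
    + [Culture("peripheral_iv", False, False)] * 94
    + [Culture("peripheral_iv", False, True)] * 6
)

# Rate when the sample is restricted to device-drawn cultures.
device_only = contamination_rate(c for c in cultures if c.used_device)

# Rate when the sample covers every culture drawn in the period.
all_cultures = contamination_rate(cultures)

print(f"Device-drawn cultures only: {device_only:.1%}")
print(f"All cultures in the period: {all_cultures:.1%}")
```

With these made-up counts, the device-only rate is 4 of 200 cultures and the all-cultures rate is 10 of 300, so the same data set supports two different headline figures depending on how the sample is defined.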